Tokens, Context Windows, and How to Count Them (With Real Examples You Can Run Today)


If you spend enough time playing with large language models, you eventually meet two quiet troublemakers: tokens and context windows.

They don’t shout. They don’t glow. But they decide how much you can send to an AI model, how much it can return, and sometimes how much you pay.

Let’s break everything down in a friendly, human way—and then I’ll show you exactly how to count tokens on your own computer using OpenAI, Gemini, and Claude.

What Exactly Is a Token?

Think of tokens as the small pieces that models read and write—like Lego bricks for language.

A token is not always a full word. Sometimes it’s a whole word (“dog”), sometimes part of a word (“run”, “ning”), and sometimes punctuation (“.”).

Models don’t read sentences.

They read tokens.

When you send a prompt, it gets chopped into tokens: a handful for a short question, thousands for a long document.

The model processes them, generates more tokens, and sends those back.

So if an LLM feels slow, expensive, or stops responding halfway…

you’re usually looking at a token problem.
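To make the "Lego bricks" idea concrete, here is a toy sketch of greedy longest-match splitting. The vocabulary and the `toy_tokenize` name are made up for illustration; real tokenizers learn tens of thousands of pieces from data and split text differently, so treat this as a picture of the idea rather than how any particular model works.

```python
# Toy greedy longest-match tokenizer.
# TOY_VOCAB is a hypothetical, hand-picked vocabulary for illustration only.
TOY_VOCAB = {"the", "dog", "is", "run", "ning", ".", " "}


def toy_tokenize(text, vocab=TOY_VOCAB):
    """Split text into the longest pieces found in vocab, left to right."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate first, then progressively shorter ones.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # No known piece starts here: emit the single character as a token.
            tokens.append(text[i])
            i += 1
    return tokens


print(toy_tokenize("the dog is running."))
```

Notice that "running" comes out as two pieces because the toy vocabulary only knows "run" and "ning".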

What Is a Context Window?

Every AI model has a maximum number of tokens it can hold in memory at once.

That limit is called the context window.

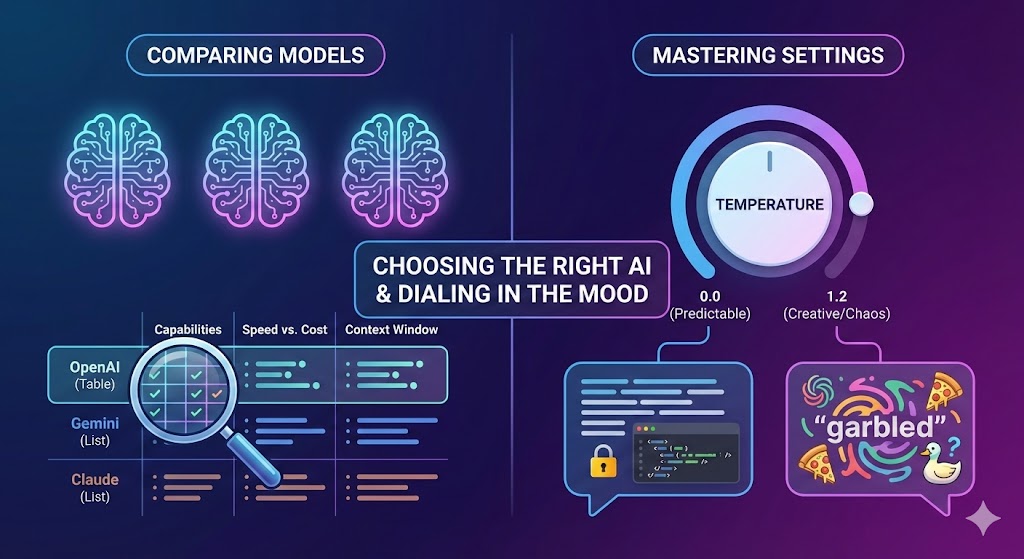

For example:

- GPT-4o → ~128k tokens

- Gemini 2.5 Flash → ~1 million tokens

- Claude 3.5 Sonnet → 200k tokens

Think of it like a desk:

- A small desk fits a notebook.

- A big desk fits textbooks, a laptop, and maybe a half-assembled drone.

A larger context window lets the model:

- read longer documents

- remember earlier parts of a conversation

- handle bigger codebases

- summarize entire PDFs

- maintain complex multi-step reasoning

But even with huge windows, the prompt, the conversation history, and the model’s response all have to fit inside.
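The arithmetic behind "everything must fit" can be sketched in a few lines. The function names and the numbers below are illustrative assumptions, not part of any SDK; the point is only that the prompt plus the space you reserve for the answer has to stay under the window.

```python
def fits_in_context(prompt_tokens, max_output_tokens, context_window):
    """True if the prompt plus the reserved output budget fits the window."""
    return prompt_tokens + max_output_tokens <= context_window


def trim_history(message_token_counts, max_output_tokens, context_window):
    """Drop the oldest messages until the rest fits; returns the kept counts."""
    kept = list(message_token_counts)
    while kept and not fits_in_context(sum(kept), max_output_tokens, context_window):
        kept.pop(0)  # the oldest message goes first
    return kept


# Illustrative numbers against a 128k window with 4k reserved for the reply.
print(fits_in_context(120_000, 4_000, 128_000))
print(fits_in_context(126_000, 4_000, 128_000))
print(trim_history([50_000, 60_000, 30_000], 4_000, 128_000))
```

Dropping the oldest messages first is just one simple policy; summarizing old turns is another common choice.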

Tokens matter. Context matters.

Now let’s learn how to count them yourself.

How to Count Tokens on Your Computer

Below are real, working examples for the three major LLM ecosystems:

- OpenAI (ChatGPT / GPT-4o)

- Google Gemini (2.5 Flash, 2.5 Pro)

- Anthropic Claude (Sonnet / Opus / Haiku)

Everything shown runs from your own machine on Windows, macOS, or Linux. The Gemini and Claude examples also need an API key and a network connection.

1. Counting Tokens with OpenAI (Using tiktoken)

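OpenAI gives us a fast local tokenizer called tiktoken.

You don’t need an API key to use it. The first time you load an encoding, tiktoken downloads its vocabulary file and caches it; after that it works fully offline.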

Install it:

pip install tiktoken

Create a file: token_test.py
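Here is a minimal sketch of what token_test.py could contain. The sample sentence and the `count_tokens` helper name are assumptions made for this example; `encoding_for_model` and `encode` are tiktoken's own calls. The optional `encoding` argument exists only so the helper can be exercised without tiktoken installed.

```python
# token_test.py - a sketch of counting tokens with tiktoken.


def count_tokens(text, model="gpt-4o", encoding=None):
    """Return the number of tokens in text.

    encoding may be any object with an encode(str) -> list method;
    when omitted, tiktoken's encoding for the given model is loaded.
    """
    if encoding is None:
        import tiktoken  # imported lazily; needs `pip install tiktoken`

        encoding = tiktoken.encoding_for_model(model)
    return len(encoding.encode(text))


if __name__ == "__main__":
    sample = "Tokens are the Lego bricks of language models."
    try:
        print("Text:", sample)
        print("Token count:", count_tokens(sample))
    except Exception as exc:  # missing package or failed first-time download
        print("Could not load the tokenizer:", exc)
```

The exact count depends on the encoding that tiktoken maps to the model name you pass.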

Run it:

python token_test.py

✅ Example Output — OpenAI (tiktoken)


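This shows how OpenAI’s tokenizer splits your text before you send anything to the API. Chat requests add a few formatting tokens per message, so the billed count can come out slightly higher than the raw text count.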

Perfect for estimating cost, cleaning prompts, or chunking large documents.
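Once you have a token count, a cost estimate is simple multiplication. The sketch below assumes per-million-token pricing with separate input and output rates; the prices in it are hypothetical placeholders, so check your provider's current price list before relying on the result.

```python
def estimate_cost(input_tokens, output_tokens, input_price_per_m, output_price_per_m):
    """Estimated cost in dollars, given prices per one million tokens."""
    total = input_tokens * input_price_per_m + output_tokens * output_price_per_m
    return total / 1_000_000


# Hypothetical prices for illustration: $2.50 per 1M input, $10.00 per 1M output.
cost = estimate_cost(12_000, 800, 2.50, 10.00)
print(f"Estimated cost: ${cost:.4f}")
```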

2. Counting Tokens with Google Gemini (Using API Usage Metadata)

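Unlike with OpenAI, this walkthrough counts Gemini tokens through the API rather than with an offline tokenizer.

You can read usage_metadata after an API call, and the SDK also has a count_tokens call that returns a count without generating a response.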

Install Gemini SDK:

pip install google-genai

Set your API key:

For Windows

setx GEMINI_API_KEY "your_key_here"

For Linux or macOS

export GEMINI_API_KEY="your_key_here"

(After setx, close the terminal and open a new one so the variable is visible. export applies to the current shell session right away.)

Create gemini_test.py:
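Here is a minimal sketch of what gemini_test.py could contain. The prompt text, the `summarize_usage` helper, and the `gemini-2.5-flash` model ID are assumptions for this example; `generate_content` and the three `usage_metadata` fields are the SDK's own names. The script skips the API call when no key is set.

```python
# gemini_test.py - a sketch of reading token usage from a Gemini response.
import os


def summarize_usage(usage):
    """Pull the three token counters off a usage_metadata object."""
    return {
        "prompt": usage.prompt_token_count,
        "response": usage.candidates_token_count,
        "total": usage.total_token_count,
    }


def main():
    api_key = os.environ.get("GEMINI_API_KEY")
    if not api_key:
        print("GEMINI_API_KEY is not set; skipping the API call.")
        return
    from google import genai  # needs `pip install google-genai`

    client = genai.Client(api_key=api_key)
    response = client.models.generate_content(
        model="gemini-2.5-flash",  # assumed model ID; use one your key can access
        contents="Explain tokens in one sentence.",
    )
    print(response.text)
    for name, count in summarize_usage(response.usage_metadata).items():
        print(f"{name} tokens: {count}")


if __name__ == "__main__":
    main()
```

On models that reason before answering, the total may come out higher than prompt plus response, since reasoning tokens can be counted too.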

Run it:

python gemini_test.py

✅ Example Output — Google Gemini (usage_metadata)


Gemini returns token usage like:

- prompt_token_count

- candidates_token_count

- total_token_count

These numbers tell you exactly how many tokens you consumed for billing and context.
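The google-genai SDK also offers a count_tokens call that returns a number without generating a reply, which is handy when you want the count before committing to a full request. A minimal sketch, assuming the same GEMINI_API_KEY variable and model ID as above; `count_gemini_tokens` is a helper name made up for this example.

```python
import os


def count_gemini_tokens(client, text, model="gemini-2.5-flash"):
    """Ask the API how many tokens text uses, without generating anything."""
    result = client.models.count_tokens(model=model, contents=text)
    return result.total_tokens


if __name__ == "__main__":
    api_key = os.environ.get("GEMINI_API_KEY")
    if api_key:
        from google import genai  # needs `pip install google-genai`

        client = genai.Client(api_key=api_key)
        print(count_gemini_tokens(client, "Explain tokens in one sentence."))
    else:
        print("GEMINI_API_KEY is not set; skipping the API call.")
```

This is still an API call, so it needs a key and a network connection.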

3. Counting Tokens with Claude (Using messages.count_tokens)

Claude gives you both:

- API token usage (after a request)

- Pre-counting tokens (before a request!)

Very helpful when planning large workloads.

Install Anthropic SDK:

pip install anthropic

Set your API key:

For Windows

setx ANTHROPIC_API_KEY "your_key_here"

For Linux or macOS

export ANTHROPIC_API_KEY="your_key_here"

(After setx, close the terminal and open a new one so the variable is visible. export applies to the current shell session right away.)

A) Count tokens after sending a message

Create claude_test.py:
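Here is a minimal sketch of what claude_test.py could contain. The prompt, the `describe_usage` helper, and the model ID are assumptions for this example (swap in whichever Claude model your account can use); `messages.create` and the `usage.input_tokens` / `usage.output_tokens` fields are the SDK's own names.

```python
# claude_test.py - a sketch of reading token usage from a Claude response.
import os


def describe_usage(usage):
    """Format the input/output token counters from a response's usage object."""
    return f"input tokens: {usage.input_tokens}, output tokens: {usage.output_tokens}"


def main():
    if not os.environ.get("ANTHROPIC_API_KEY"):
        print("ANTHROPIC_API_KEY is not set; skipping the API call.")
        return
    import anthropic  # needs `pip install anthropic`

    client = anthropic.Anthropic()  # picks up ANTHROPIC_API_KEY from the environment
    message = client.messages.create(
        model="claude-3-5-sonnet-20241022",  # assumed model ID; replace as needed
        max_tokens=100,
        messages=[{"role": "user", "content": "Explain tokens in one sentence."}],
    )
    print(message.content[0].text)
    print(describe_usage(message.usage))


if __name__ == "__main__":
    main()
```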

Run:

python claude_test.py

✅ Example Output — Claude (token usage + count_tokens)


B) Count tokens before sending (Claude’s pre-counter)

Create claude_count.py:
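Here is a minimal sketch of what claude_count.py could contain. The `count_claude_tokens` helper name, the prompt, and the model ID are assumptions for this example; `messages.count_tokens` and its `input_tokens` result field come from the Anthropic SDK.

```python
# claude_count.py - a sketch of counting tokens before sending a message.
import os


def count_claude_tokens(client, text, model="claude-3-5-sonnet-20241022"):
    """Return the input token count for a single user message, without sending it."""
    result = client.messages.count_tokens(
        model=model,  # assumed model ID; replace as needed
        messages=[{"role": "user", "content": text}],
    )
    return result.input_tokens


if __name__ == "__main__":
    if os.environ.get("ANTHROPIC_API_KEY"):
        import anthropic  # needs `pip install anthropic`

        client = anthropic.Anthropic()
        print("Input tokens:", count_claude_tokens(client, "Explain tokens in one sentence."))
    else:
        print("ANTHROPIC_API_KEY is not set; skipping the API call.")
```

The count covers only the input side; the output size is whatever the model produces, capped by max_tokens.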


This is priceless when:

- splitting long documents

- debugging token overflows

- estimating cost ahead of time

- designing multi-step workflows

Claude’s token API is one of the cleanest in the industry.

Why Tokens and Context Windows Matter

Tokens matter because they determine:

1. Cost

Most APIs bill per token, usually with separate rates for input and output tokens.

2. Speed

Longer prompts → more tokens → slower responses.

3. Model Memory

The prompt and the response together cannot exceed the model’s context window.

4. Prompt Engineering Quality

Good prompt design = fewer tokens + clearer instructions.

5. Chunking & Summarization

If you feed large documents, you must split them into token-safe chunks.
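Chunking can be sketched as "tokenize, slice, join back". The version below uses whitespace-separated words as a stand-in for real tokens so it runs with no dependencies; `chunk_by_tokens` and its parameters are hypothetical names for this example, and a real pipeline would pass in an actual tokenizer's encode and decode functions instead.

```python
def chunk_by_tokens(text, max_tokens, tokenize=str.split, detokenize=" ".join):
    """Split text into pieces of at most max_tokens tokens each.

    By default, "tokens" are whitespace-separated words, which is only a
    rough stand-in for a model tokenizer.
    """
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    tokens = tokenize(text)
    return [
        detokenize(tokens[i:i + max_tokens])
        for i in range(0, len(tokens), max_tokens)
    ]


document = "one two three four five six seven"
for piece in chunk_by_tokens(document, 3):
    print(repr(piece))
```

With tiktoken, for example, you could pass an encoding's `encode` as `tokenize` and its `decode` as `detokenize` so the chunk sizes match what the model sees.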

Understanding tokens is like understanding fuel in a car:

you drive better when you know the limits.

A Quick Summary Table

| Model | Offline Token Counting | After-Request Token Usage | Pre-Request Token Check | Difficulty |
|---|---|---|---|---|
| OpenAI GPT-4o | ✔ tiktoken | ✔ Yes | ✔ Via tiktoken | Easiest |
| Gemini 2.5 | ❌ No official | ✔ usage_metadata | ✔ count_tokens (API call) | Medium |
| Claude 3.5 | ❌ No offline tools | ✔ Yes | ✔ messages.count_tokens | Very easy |

In Plain English

- OpenAI → great offline tools

- Gemini → count tokens from API

- Claude → count tokens before and after API calls

And now you can do all three on your own machine.

Conclusion

Tokens and context windows aren’t scary—they’re predictable.

Once you know how many tokens you're working with, everything becomes easier:

- prompts behave consistently

- costs become manageable

- large documents become tractable

- failures become debuggable
