How to Compare LLM Models (and Why Temperature Can Make AI Talk Like a Philosopher on Energy Drinks)


Choosing the right AI model these days feels a bit like choosing a laptop.

Everyone claims they’re “the fastest,” “most intelligent,” or “best for developers,” but once you dig in, the specs start blending into alphabet soup.

So today, let’s break things down — the human way.

I’ll walk you through how to compare LLM models, why OpenAI gives you a neat comparison page while Gemini and Claude do not, and what happens when you turn up the temperature and the AI suddenly starts talking like your overly creative friend.

Grab a cup of tea… this one’s fun.

Why We Compare LLM Models in the First Place

Every LLM is basically a different brain with different strengths. Some are quick thinkers. Some are logic-heavy. Some are poetry-loving weirdos. And some are a great fit for automation tools like n8n, where speed matters more than philosophical accuracy.

When we compare models, we mainly look at:

-

Capabilities – reasoning, coding, creativity

-

Speed vs cost – fast brains cost more

-

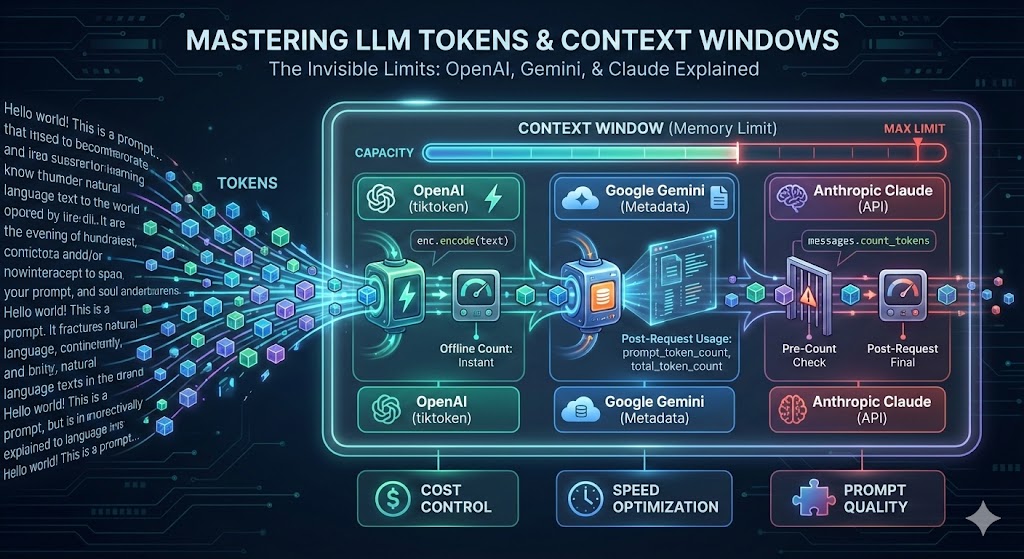

Context window – how much info the model can “remember”

-

Multimodality – can it see images? read audio? analyze files?

-

Reliability – does it hallucinate? does it stay on track?

The goal is not to pick “the smartest model.”

The goal is to pick the right model for the right job.
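Here is a minimal sketch of that idea in Python, assuming you have already collected each model’s specs into a small catalog. The model names (`fast-small`, `balanced`, `smartest`), their numbers and the `pick_model` helper are hypothetical placeholders, not real products or pricing. The point is the approach: filter on hard requirements first, then pick the cheapest model that survives.

```python
from dataclasses import dataclass


@dataclass
class ModelSpec:
    name: str
    context_window: int          # max tokens the model can take in at once
    supports_vision: bool
    price_per_1m_input: float    # USD per million input tokens (placeholder)


# Hypothetical catalog: names and numbers are placeholders, not real pricing.
CATALOG = [
    ModelSpec("fast-small", 128_000, False, 0.15),
    ModelSpec("balanced", 200_000, True, 3.00),
    ModelSpec("smartest", 200_000, True, 15.00),
]


def pick_model(catalog, min_context, needs_vision):
    """Return the cheapest model that meets the job's hard requirements, or None."""
    candidates = [
        m for m in catalog
        if m.context_window >= min_context and (m.supports_vision or not needs_vision)
    ]
    return min(candidates, key=lambda m: m.price_per_1m_input, default=None)


print(pick_model(CATALOG, 100_000, needs_vision=False).name)  # fast-small
print(pick_model(CATALOG, 150_000, needs_vision=True).name)   # balanced
```

Swap in real specs from each provider’s docs. Softer criteria like reasoning quality or hallucination rate are harder to encode and usually need your own test prompts.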

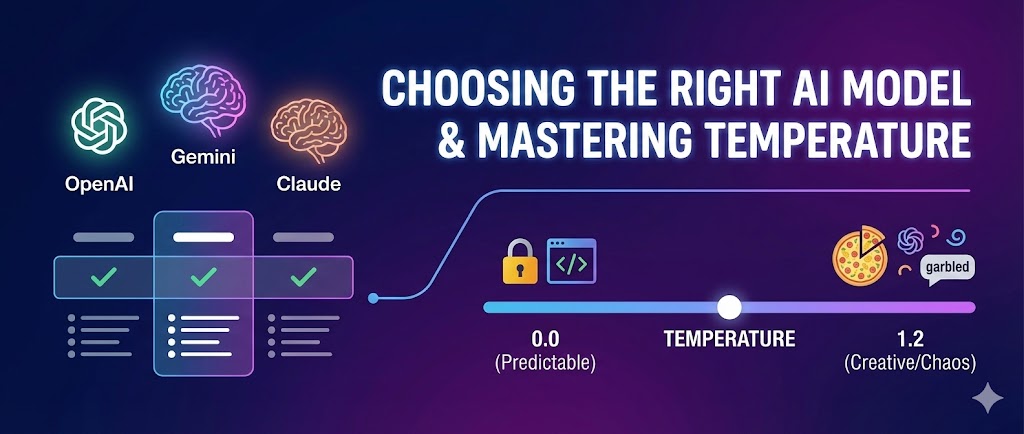

OpenAI Is Currently the Only One With a Clean Comparison Page

OpenAI offers a tidy table where you can compare models side-by-side:

https://platform.openai.com/docs/models/compare

It’s simple, visual, and tells you things like:

- Which model is best at reasoning
- Which is cheapest
- Which is fastest
- Which supports vision and audio
- Which one you can use for structured JSON

It’s honestly one of the most developer-friendly tools they’ve ever built.

Gemini and Claude Don’t Have a Comparison Page — Here’s Why

Both Google (Gemini) and Anthropic (Claude) give you model lists and overview pages, but no interactive side-by-side comparison tool like OpenAI’s.

Why?

Because their model families are smaller and simpler.

Claude models:

- Claude 3.5 Haiku
- Claude 3.5 Sonnet
- Claude 3 Opus

That's basically:

Fast → Balanced → Smartest.

Gemini models:

- Gemini 2.0 Flash
- Gemini 2.0 Pro
- A few legacy versions

Again:

Fast → Powerful.

Their documentation describes capabilities, but not in a visual comparison grid.

OpenAI is still the only one offering that developer-friendly experience.

Model Settings: Why We Change Temperature, Top-P, Max Tokens & More

Once you choose a model, you still need to tell it how to behave.

Think of model settings like mood sliders for the AI.

Here are the key ones:

Temperature → Creativity

Low temperature = safe, predictable, consistent.

High temperature = creative, risky, surprising.
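Under the hood, LLM samplers typically divide the model’s raw next-word scores (logits) by the temperature before turning them into probabilities with a softmax. A rough sketch, with made-up scores for a hypothetical next word:

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, dividing by temperature first."""
    if temperature <= 0:
        # T=0 would divide by zero; in practice it is usually handled as
        # "always pick the top-scoring word".
        raise ValueError("temperature must be > 0")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)                       # subtract max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical next-word scores after "Walk straight and turn ..."
words = ["left", "right", "around", "into a duck"]
logits = [4.0, 3.0, 1.0, -1.0]

for t in (0.1, 0.7, 1.2):
    probs = softmax_with_temperature(logits, t)
    print(t, {w: round(p, 3) for w, p in zip(words, probs)})
```

At 0.1, almost all the probability piles onto "left"; at 1.2 the distribution flattens and even "into a duck" gets a small but real share.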

Top-P → Diversity of choices

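Similar to temperature, but instead of reshaping the probabilities it limits the candidates: the model only samples from the smallest set of likely next words whose combined probability reaches P (for example 0.9). Lower P = a narrower vocabulary net.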
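Top-P is also known as nucleus sampling. A minimal sketch of just the filtering step, using hypothetical probabilities (`top_p_filter` is an illustrative helper, not a library function):

```python
def top_p_filter(probs, p):
    """Keep the smallest set of most-likely tokens whose cumulative probability
    reaches p, then renormalize so the kept probabilities sum to 1."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept, cumulative = {}, 0.0
    for token, prob in ranked:
        kept[token] = prob
        cumulative += prob
        if cumulative >= p:
            break
    total = sum(kept.values())
    return {token: prob / total for token, prob in kept.items()}


# Hypothetical next-word probabilities
probs = {"left": 0.6, "right": 0.25, "around": 0.1, "into a duck": 0.05}
print(top_p_filter(probs, 0.9))   # keeps left, right, around (renormalized)
print(top_p_filter(probs, 0.5))   # keeps only left
```

The sampler then picks from whatever survives, so "into a duck" can never appear at P = 0.9 here, no matter the temperature.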

Max tokens → Response length

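Prevents novel-length answers when you only wanted a paragraph. Keep in mind it’s a hard cap, not a style hint: if the model hits the limit, the answer simply gets cut off mid-sentence.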
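The cap doesn’t make the model write a shorter answer; it just stops generation. The toy loop below shows that. The token list stands in for a real model, `generate_with_cap` is a hypothetical helper, and the finish reason is modeled on the `"stop"` / `"length"` values that OpenAI’s Chat Completions API reports in `finish_reason`.

```python
def generate_with_cap(next_tokens, max_tokens):
    """Toy decoder: emit tokens until the model would stop or the cap is hit.

    `next_tokens` stands in for the model (hypothetical); "<eos>" marks a
    natural end. Returns the text plus a finish reason.
    """
    output = []
    for token in next_tokens:
        if token == "<eos>":
            return " ".join(output), "stop"      # model finished on its own
        if len(output) >= max_tokens:
            return " ".join(output), "length"    # cut off by the cap
        output.append(token)
    return " ".join(output), "stop"


answer = "Walk straight then turn left at the bakery and the station is after the park <eos>".split()
print(generate_with_cap(answer, 50))  # full sentence, finish reason "stop"
print(generate_with_cap(answer, 5))   # ('Walk straight then turn left', 'length')
```

If you see a "length" finish reason in real logs, the fix is usually a bigger cap or a prompt that asks for brevity, not a lower cap.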

Seed → Reproducibility

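Same seed + same prompt + same settings = (mostly) the same output. Providers such as OpenAI describe seeded sampling as best-effort, and not every API offers a seed parameter.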

Useful for testing and comparing models.
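The idea behind a seed is the same as with any pseudo-random generator. This local sketch uses Python’s `random.Random` with hypothetical pizza weights (`sample_words` is an illustrative helper). Hosted APIs add more moving parts, so treat it as an analogy rather than a guarantee.

```python
import random


def sample_words(words, weights, n, seed=None):
    """Draw n weighted picks; a fixed seed makes the whole sequence repeatable."""
    rng = random.Random(seed)   # private generator, so global random state is untouched
    return rng.choices(words, weights=weights, k=n)


# Hypothetical "pizza model" preferences
words = ["Margherita", "Pepperoni", "Hawaiian", "Honey-chicken"]
weights = [0.5, 0.3, 0.15, 0.05]

run_a = sample_words(words, weights, 5, seed=42)
run_b = sample_words(words, weights, 5, seed=42)
print(run_a == run_b)                           # True: same seed, same picks
print(sample_words(words, weights, 5, seed=7))  # a different seed may give different picks
```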

Real-Life Example: Why High Temperature Sometimes Produces Garbage

This is the part everyone learns the hard way.

Scenario: You ask,

“How do I get to the train station?”

Temperature 0.1 — Accurate but boring

“Walk straight, turn left, and continue until you reach the station.”

Temperature 0.7 — Still helpful, more human

“Walk straight for five minutes, turn left at the bakery, and you’ll see the station after the park.”

Temperature 1.2 — Chaos mode

“Oh, the train station! Walk… or maybe don’t? Follow your heart. If you pass a giant bronze duck, you’ve gone too far but also not far enough.”

See?

Higher temperature = more freedom → more creativity → more nonsense.

This is why we never use high temperatures for code, math, or cybersecurity tasks.

The last thing you need is a firewall rule written in “creative mode.”
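To make "more freedom → more nonsense" concrete, here’s a small simulation under made-up assumptions: four hypothetical next words with fixed scores, sampled 10,000 times at each temperature. The scores and the `sample_counts` helper are illustrative, not taken from a real model.

```python
import math
import random
from collections import Counter


def sample_counts(logits, temperature, n, seed=0):
    """Sample n next-word indices at a given temperature and count each one."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    weights = [math.exp(x - peak) for x in scaled]  # unnormalized softmax; choices() normalizes
    rng = random.Random(seed)
    return Counter(rng.choices(range(len(logits)), weights=weights, k=n))


# Hypothetical scores for the word after "Walk straight and turn ..."
words = ["left", "right", "around", "into a duck"]
logits = [4.0, 3.0, 1.0, -1.0]

for t in (0.1, 0.7, 1.2):
    counts = sample_counts(logits, t, n=10_000)
    print(t, {words[i]: counts[i] for i in range(len(words))})
```

At 0.1 nearly every draw is "left"; by 1.2 the off-the-wall option starts showing up in a small but real fraction of draws. In a long answer, that adds up to the occasional bronze duck.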

Another Real-Life Example: Pizza Ordering

Temperature 0.1: “Order Margherita.”

Temperature 0.7: “Pepperoni with extra cheese—can’t go wrong.”

Temperature 1.2: “Half spicy chicken, half pineapple, drizzle with honey, stuffed crust… let’s get weird.”

High temperature doesn’t make AI smarter.

It makes it less predictable.

And unpredictability can be fun or disastrous, depending on the task.

The Simple Rule

Here’s how I decide temperature in my workflows and apps:

- 0.0 – 0.3: coding, cybersec, CLI commands, instructions
- 0.4 – 0.7: explanations, tutorials, blogging
- 0.8 – 1.2: creative writing, story ideas, brainstorming
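If you want to bake this rule into a workflow, a lookup table is enough. A sketch, assuming illustrative task labels and two choices of my own that aren’t part of any standard: use the midpoint of each range, and fall back to the cautious range for tasks the table doesn’t know.

```python
# The rule of thumb as a lookup table (labels are illustrative, not a standard).
TEMPERATURE_RANGES = {
    "precise": (0.0, 0.3),    # coding, cybersec, CLI commands, instructions
    "explain": (0.4, 0.7),    # explanations, tutorials, blogging
    "creative": (0.8, 1.2),   # creative writing, story ideas, brainstorming
}

TASK_TO_BUCKET = {
    "coding": "precise", "cybersec": "precise", "cli": "precise", "instructions": "precise",
    "explanation": "explain", "tutorial": "explain", "blogging": "explain",
    "creative_writing": "creative", "story_ideas": "creative", "brainstorming": "creative",
}


def pick_temperature(task: str) -> float:
    """Return the midpoint of the task's range; unknown tasks get the cautious range."""
    bucket = TASK_TO_BUCKET.get(task, "precise")
    low, high = TEMPERATURE_RANGES[bucket]
    return round((low + high) / 2, 2)


print(pick_temperature("coding"))         # 0.15
print(pick_temperature("tutorial"))       # 0.55
print(pick_temperature("brainstorming"))  # 1.0
```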

The right temperature matters just as much as the right model.

Final Thoughts

Comparing LLMs is a lot like choosing team members for a project.

One is the strategist, one is the speedster, one is the creative thinker.

OpenAI makes comparison easy with a dedicated comparison hub.

Gemini and Claude don’t — not because they can’t, but because their model families are smaller and easier to describe without a comparison table.

And temperature?

It’s not just a setting.

It’s the difference between getting a clean, logical answer…

and getting a response that sounds like someone who drank three cups of espresso and started improvising life advice.

Use it wisely.
