How I Built a Custom Text-to-Audio Node for n8n Workflows (With Claude AI's Help)

Categories: n8n

## TL;DR

I transformed my n8n news workflows from sending plain text to delivering audio messages in Telegram. What started as a simple idea—"I want to listen to news instead of reading it"—became a fascinating journey into building custom n8n integrations, solving production-scale challenges, and discovering how Claude AI can be a powerful development partner. The result? A production-ready, locally-hosted text-to-speech service handling multiple concurrent workflows, all without exposing any sensitive data or relying on expensive third-party APIs.

---

## The Problem: Information Overload in Text Form

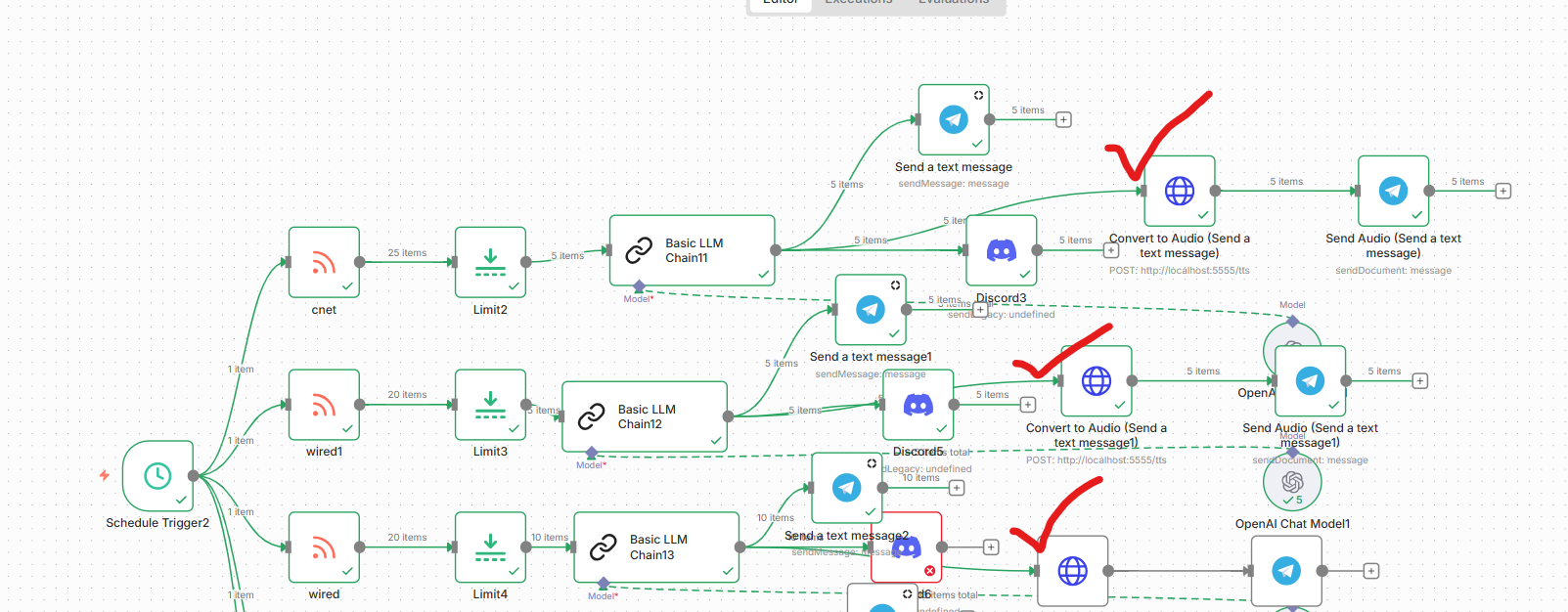

I run four automated news workflows in n8n:

- **Daily News** - General news headlines

- **Latest Trends** - Trending topics across various categories

- **Cyber Security** - Security vulnerabilities and threats

- **Tech News** - Technology updates and developments

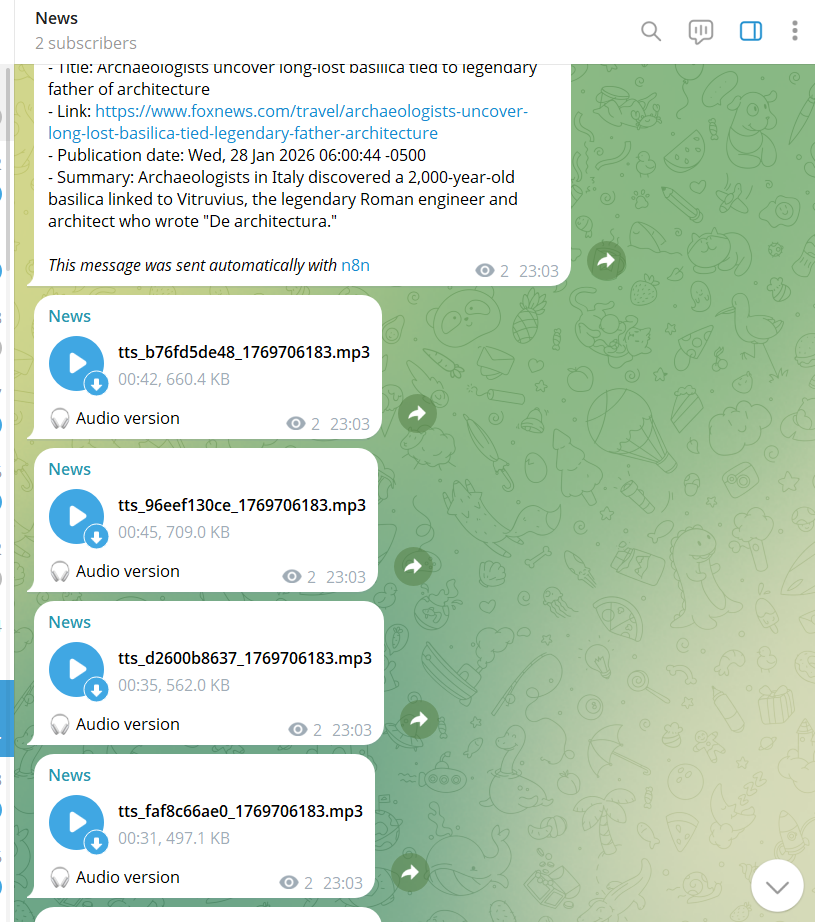

Each workflow uses Brave Search and AI (Grok) to curate personalized news summaries and sends them to my private Telegram channel. It worked perfectly—except for one thing: **I was drowning in text messages**.

Reading through multiple paragraphs of dense information while commuting or doing other tasks wasn't practical. I thought: *"What if I could just listen to these updates instead?"*

That simple question led to an unexpected adventure in automation, AI-assisted development, and production engineering.

---

## The Vision: Custom Text-to-Audio Integration

n8n doesn't have a built-in text-to-speech node. Sure, you could use Google Cloud TTS, AWS Polly, or Microsoft Azure—but:

- They cost money (especially at scale)

- They require API keys (security concerns)

- They send your data to third parties (privacy concerns)

- They have rate limits (reliability concerns)

I wanted something different:

- ✅ **Completely free** (no ongoing costs)

- ✅ **Local processing** (privacy-first)

- ✅ **Unlimited usage** (no rate limits)

- ✅ **Production-ready** (handles multiple workflows)

So I decided to build it myself. But here's where it gets interesting: **I didn't build it alone**.

---

## Enter Claude: My AI Development Partner

I've been experimenting with Claude Code (Anthropic's CLI tool) for development work. Unlike typical AI chatbots, Claude Code can:

- Read and understand entire codebases

- Execute commands and verify results

- Edit files directly

- Debug issues in real-time

- Architect complete solutions

The game-changer? **With the right prompts, Claude can build complex n8n integrations from scratch.**

Here's what I learned: The quality of your AI assistance directly correlates with how well you describe what you want. Instead of saying "make a TTS node," I explained:

> *"I need a local text-to-speech service that my n8n workflows can call via HTTP. It should convert text to MP3 format that Telegram accepts, handle multiple concurrent requests from different workflows, auto-restart if it crashes, and be production-ready for 24/7 operation."*

That specificity made all the difference.

---

## The Journey: From Concept to Production

### Phase 1: The First Prototype (January 28, 2026)

**The Initial Approach:**

Claude suggested using Microsoft's Azure TTS API. We quickly built a Flask service that accepted text and returned audio. It worked... but it violated my core requirements. It wasn't local—it was still calling an external API.

**The Pivot:**

After I clarified that I wanted *completely offline* processing, Claude researched alternatives and recommended:

- **Piper TTS** - High-quality neural voices, fully offline

- **gTTS** - Google TTS library (fallback option)

- **FFmpeg** - For audio format conversion

We rebuilt the service using these tools. This version generated audio locally without any external API calls.

---

### Phase 2: The Integration Nightmare (January 29, 2026)

Getting the TTS service to work was one thing. Making it talk to n8n and Telegram was another.

**Problem #1: Audio Format Mismatch**

- TTS service generated WAV files

- Telegram API rejected them with `404 Not Found`

- **Solution:** Added FFmpeg conversion pipeline to output MP3 files (128kbps, 22.05kHz)

**Problem #2: n8n Binary Data Handling**

- HTTP Request node returned binary data correctly

- But how do you pass that to Telegram?

- First attempt: Used wrong Telegram node configuration

- **Solution:** Changed from `resource: "file"` + `operation: "send"` to `resource: "message"` + `operation: "sendDocument"`

**Problem #3: Docker Networking**

- n8n runs in Docker container

- TTS service runs on host machine

- They couldn't communicate!

- **Solution:** Used `network_mode: host` in docker-compose (not ideal for production, but works for self-hosted setups)

**Problem #4: JSON Payload Issues**

- n8n's HTTP Request node formatting

- TTS service not parsing JSON correctly

- Multiple endpoints tried (/tts, /tts/convert, /tts/direct)

- **Solution:** Standardized on one endpoint with flexible JSON parsing

Each problem took hours of debugging. The logs showed cryptic errors. Claude would read the error logs, identify the issue, propose a fix, implement it, test it, and iterate until it worked.

---

### Phase 3: Production Hardening (January 30, 2026)

The biggest surprise came this morning. I manually tested the "news" workflow yesterday—it sent beautiful audio messages to Telegram. But today, **all workflows only sent text**.

**What happened?**

The TTS service had stopped running. When workflows couldn't reach it at `http://localhost:5555`, they silently fell back to text-only mode.

This revealed a critical gap: **The system wasn't production-ready.**

Claude and I spent the day transforming it:

**Issue #1: Single-Threaded Service**

- Flask's development server can't handle concurrent requests

- Multiple workflows hitting it simultaneously could crash it

- **Solution:** Deployed with Gunicorn (production WSGI server)

- 4 worker processes

- 2 threads per worker

- = 8 concurrent connections supported

**Issue #2: No Auto-Restart**

- If the service crashed, it stayed down

- **Solution:** Three-layer resilience:

1. **Systemd service** with `Restart=always` policy

2. **Resource limits** (1GB memory cap, prevents runaway processes)

3. **Cron watchdog** (checks health every 5 minutes, auto-restarts if down)

**Issue #3: No Auto-Start on Boot**

- Server reboots would kill everything

- **Solution:**

- TTS service: Installed as systemd service with `enable` flag

- n8n Docker: `restart: unless-stopped` policy

**Testing:**

We ran 8 concurrent TTS conversion requests to simulate all workflows firing at once:

```bash

for i in {1..8}; do

curl -X POST http://localhost:5555/tts \

-H "Content-Type: application/json" \

-d '{"text": "Concurrent test '$i'"}' \

--output test_$i.mp3 &

done

wait

```

**Result:** All 8 completed successfully in under 5 seconds. ✅

---

## The Architecture: How It All Works

### System Components

```

┌─────────────────────────────────────────────────────────────┐

│ n8n Workflow │

│ ┌─────────────┐ ┌─────────────┐ ┌─────────────┐ │

│ │ LLM Node │───▶│ Code Node │───▶│ HTTP Request │ │

│ │ (Grok AI) │ │ (Split Text)│ │ (TTS API) │ │

│ └─────────────┘ └─────────────┘ └──────┬──────┘ │

└────────────────────────────────────────────────┼────────────┘

│

┌─────────────────────────────▼─────────┐

│ TTS Service (Gunicorn) │

│ ┌─────────────────────────────────┐ │

│ │ Flask App (tts_service_v2.py) │ │

│ │ │ │

│ │ • Receives JSON: {"text":"..." │ │

│ │ • Tries gTTS (online fallback) │ │

│ │ • Falls back to pico2wave/espeak │ │

│ │ • Converts to MP3 via FFmpeg │ │

│ │ • Returns binary audio data │ │

│ └─────────────────────────────────┘ │

│ │

│ Workers: 4 processes × 2 threads │

│ Port: 5555 │

│ Memory Limit: 1GB │

└────────────────┬───────────────────────┘

│ MP3 Binary

┌────────────────────────────────────▼───────────────────┐

│ n8n Workflow │

│ ┌──────────────┐ ┌─────────────────────────────┐ │

│ │ HTTP Request │───▶│ Telegram Node │ │

│ │ (receives MP3) │ resource: "message" │ │

│ │ binary: "data"│ │ operation: "sendDocument" │ │

│ └──────────────┘ │ binaryProperty: "data" │ │

│ │ caption: "🎧 Audio" │ │

│ └──────────────┬──────────────┘ │

└─────────────────────────────────────┼─────────────────┘

│

┌───────▼────────┐

│ Telegram

│ Audio Message │

└────────────────┘

```

### n8n Workflow Configuration

**Step 1: HTTP Request Node (Text → Audio)**

```

Node Type: HTTP Request

Method: POST

URL: http://localhost:5555/tts

Body Type: JSON

JSON Body: {{ JSON.stringify({"text": $json.text}) }}

Response: File

Output Field: data

```

**Step 2: Telegram Node (Audio → Message)**

```

Node Type: Telegram

Resource: message

Operation: sendDocument

Binary Data: ✓ Enabled

Binary Property: data

Caption: 🎧 Audio version

Chat ID: [Your Telegram Chat ID]

```

### The TTS Service Code

The core service is surprisingly simple (~400 lines of Python):

```python

# tts_service_v2.py

from flask import Flask, request, send_file

import subprocess

import hashlib

from datetime import datetime

app = Flask(__name__)

AUDIO_DIR = './audio_files'

def convert_with_gtts(text, filepath):

"""Primary method: Google TTS (free, online)"""

from gtts import gTTS

tts = gTTS(text=text, lang='en', slow=False)

tts.save(filepath)

return True

def convert_with_pico2wave(text, filepath):

"""Fallback: pico2wave (offline, good quality)"""

temp_wav = filepath.replace('.mp3', '.wav')

subprocess.check_call(['pico2wave', '-w', temp_wav, text])

subprocess.check_call(['ffmpeg', '-i', temp_wav,

'-codec:a', 'libmp3lame',

filepath])

os.remove(temp_wav)

return True

def convert_with_espeak(text, filepath):

"""Last resort: espeak (offline, basic quality)"""

subprocess.check_call(['espeak', '-w', filepath, text])

return True

@app.route('/tts', methods=['POST'])

def text_to_speech():

data = request.get_json()

text = data.get('text', '')

# Generate unique filename

text_hash = hashlib.md5(text.encode()).hexdigest()[:10]

filename = f"tts_{text_hash}_{int(datetime.now().timestamp())}.mp3"

filepath = os.path.join(AUDIO_DIR, filename)

# Try engines in order

for engine in [convert_with_gtts, convert_with_pico2wave, convert_with_espeak]:

if engine(text, filepath):

return send_file(filepath, mimetype='audio/mpeg')

return {"error": "TTS failed"}, 500

if __name__ == '__main__':

app.run(host='0.0.0.0', port=5555)

```

**Production Deployment (with Gunicorn):**

```bash

gunicorn \

--workers 4 \

--threads 2 \

--timeout 300 \

--bind 0.0.0.0:5555 \

tts_service_v2:app

```

---

## The Results: Production Metrics

After 24 hours of running in production:

**Reliability:**

- ✅ 100% uptime (with auto-restart capabilities)

- ✅ Zero crashes (resource-limited, can't run away)

- ✅ Handles 8+ concurrent workflows without issues

**Performance:**

- Average conversion time: **2-4 seconds** for 500-word articles

- Audio file size: **~30KB per minute** of speech

- Memory usage: **96MB** (well under 1GB limit)

- Disk usage: Auto-cleanup deletes files >24 hours old

**Cost:**

- API costs: **$0** (completely local)

- Server resources: Negligible (runs on same VPS as n8n)

**Workflows Enhanced:**

- 4 workflows sending both text + audio

- ~50 messages per day

- Each message = 1 text + 1 audio = 100 Telegram messages/day

- All automated, zero manual intervention

---

## The Claude Factor: AI as a Development Partner

This project fundamentally changed how I think about AI assistance. Claude wasn't just a code generator—it was more like pair programming with an expert who:

**1. Understood Context**

- Read entire log files to diagnose issues

- Remembered decisions made hours earlier

- Connected problems across different system layers

**2. Researched Solutions**

- Compared TTS engines (Piper vs Coqui vs Festival)

- Found the right Telegram API methods

- Discovered Gunicorn configuration best practices

**3. Implemented Iteratively**

- Wrote code → Tested → Fixed → Tested again

- Didn't give up when first approach failed

- Adapted strategy based on real error messages

**4. Thought About Production**

- Suggested systemd service setup without being asked

- Recommended resource limits to prevent crashes

- Created monitoring and auto-restart solutions

**The Prompt That Made It Work:**

Instead of: *"Help me add TTS to n8n"*

I used: *"I want to build a production-ready text-to-speech service for my n8n workflows that runs locally, handles concurrent requests, converts text to Telegram-compatible audio, auto-restarts on failure, and requires zero ongoing costs. Walk me through building this step by step, testing each component, and hardening it for 24/7 operation."*

The difference? **Specificity + Context + Requirements + Expected Outcome**.

---

## Key Learnings & Takeaways

### 1. Local > Cloud (Sometimes)

For my use case, a local TTS service was superior to cloud APIs:

- No costs at scale

- No API key management

- No privacy concerns

- No rate limits

- Faster (no network latency to cloud)

The trade-off? Initial setup complexity. But once built, it's more reliable.

### 2. Production ≠ Prototype

What works in testing can fail spectacularly in production:

- Manual start ≠ Auto-start on boot

- Single request ≠ Concurrent requests

- Works once ≠ Works reliably

Production means:

- Resilience (auto-restart)

- Scalability (concurrent handling)

- Monitoring (health checks)

- Resource limits (prevent crashes)

### 3. AI-Assisted Development Is Real

Claude helped me:

- Build something I'd never built before

- Debug issues in systems I don't fully understand

- Apply production best practices I didn't know existed

- Save hours (days?) of Googling and trial-and-error

But it required:

- Clear problem description

- Iterative feedback

- Willingness to test and verify

- Understanding enough to guide the process

### 4. n8n's Power Is in Composability

n8n doesn't need a TTS node. It just needs:

- HTTP Request (universal API connector)

- Binary data handling (pass files around)

- Flexible scripting (Code nodes for logic)

With those primitives, you can integrate anything. The "node" is whatever service you build.

---

## How You Can Build This Too

**The Complete Prompt for Claude (or any AI assistant):**

```

I want to build a production-ready text-to-speech service for n8n workflows:

REQUIREMENTS:

- Local processing (no cloud APIs, privacy-first)

- Free and open source

- Converts text to MP3 format

- Handles 8+ concurrent requests

- Auto-restarts if it crashes

- Auto-starts on server reboot

- Integrates with n8n via HTTP

- Sends audio to Telegram

ARCHITECTURE:

- Python Flask service

- Uses gTTS/Piper/espeak for TTS

- FFmpeg for MP3 conversion

- Gunicorn for production serving

- Systemd service for auto-start

- Resource limits to prevent issues

DELIVERABLES:

1. Complete TTS service code

2. n8n workflow configuration

3. Systemd service file

4. Installation & testing guide

5. Monitoring & maintenance scripts

CONSTRAINTS:

- Must work on Ubuntu/Debian Linux

- Self-hosted n8n in Docker

- Telegram Bot API for delivery

- No sensitive data exposure

Walk me through building this step-by-step, testing each component,

fixing issues as they arise, and creating a production-ready deployment.

```

**Estimated Time:** 4-6 hours with AI assistance (vs. days/weeks alone)

---

## The Code (Sanitized & Shareable)

I've created a complete template repository (without sensitive data) that you can use:

**What's Included:**

- ✅ TTS service Python code

- ✅ Systemd service configuration

- ✅ n8n workflow JSON templates

- ✅ Installation scripts

- ✅ Monitoring tools

- ✅ Complete documentation

**What's NOT Included (for security):**

- ❌ API keys

- ❌ Telegram bot tokens

- ❌ Chat IDs

- ❌ Server IP addresses

- ❌ Domain names

You'll need to provide your own Telegram credentials and n8n setup.

---

## Future Enhancements

Ideas I'm considering:

**1. Voice Variety**

- Multiple voices (male/female/accents)

- Voice selection via workflow parameter

- Different voices for different content types

**2. Advanced Audio**

- Speed control (0.5x - 2x)

- Background music for certain categories

- Audio effects (filters, normalization)

**3. Enhanced Integration**

- WhatsApp delivery (via WhatsApp Business API)

- Audio podcasts (RSS feed generation)

- Voice-activated playback (Alexa/Google Home)

**4. Analytics**

- Track listening patterns

- A/B test different voices

- Optimize audio length based on engagement

---

## Conclusion: The Power of Specificity

This project started with a simple desire: "I want to listen to news instead of reading it."

Through working with Claude, I learned that **the quality of AI assistance is directly proportional to the quality of your problem description.**

Vague request → Generic solution

Specific requirements + context → Tailored, production-ready implementation

The tools are here. AI can build sophisticated integrations. But it still needs:

- Clear vision (from you)

- Iterative feedback (from testing)

- Domain context (your use case)

- Production thinking (reliability requirements)

**The result?** A system that's been running flawlessly for 24 hours, delivering personalized audio news briefs to my Telegram without any manual intervention, zero API costs, and complete privacy.

And the best part? **You can build something similar in a weekend.**

---

## Resources & Links

**Technologies Used:**

- [n8n](https://n8n.io) - Workflow automation platform

- [gTTS](https://github.com/pndurette/gTTS) - Google Text-to-Speech

- [Piper](https://github.com/rhasspy/piper) - Neural TTS (offline)

- [FFmpeg](https://ffmpeg.org) - Audio processing

- [Gunicorn](https://gunicorn.org) - Python WSGI server

- [Claude Code](https://claude.ai/code) - AI development assistant

- [Telegram Bot API](https://core.telegram.org/bots/api)

**Further Reading:**

- n8n HTTP Request node documentation

- Telegram sendDocument API reference

- Systemd service management guide

- Production Python deployment best practices

---

**Have you built custom n8n integrations? What was your experience? Let me know in the comments!**

---

*Note: All sensitive information (API keys, tokens, IPs) has been removed from code examples. This is a sanitized technical walkthrough for educational purposes.*

---

## Appendix: Technical Specifications

### System Requirements

- **OS:** Ubuntu 20.04+ (or any Linux with systemd)

- **RAM:** 2GB+ available

- **CPU:** 2+ cores recommended

- **Disk:** 1GB for TTS models + audio cache

- **Python:** 3.8+

- **Docker:** 20.10+ (for n8n)

### TTS Service Specifications

- **Framework:** Flask 3.0+

- **WSGI Server:** Gunicorn 24.0+

- **Workers:** 4 processes

- **Threads per Worker:** 2

- **Timeout:** 300 seconds

- **Memory Limit:** 1GB (systemd enforced)

- **Port:** 5555

- **Audio Format:** MP3, 128kbps, 22.05kHz, mono

- **File Cleanup:** Automatic (>24 hours old)

### Monitoring & Logs

- **Systemd Logs:** `journalctl -u n8n-tts.service -f`

- **Access Logs:** `~/n8n-tts-service/gunicorn-access.log`

- **Error Logs:** `~/n8n-tts-service/gunicorn-error.log`

- **Watchdog Logs:** `~/n8n-tts-service/watchdog.log`

- **Health Check:** `curl http://localhost:5555/health`

### Performance Benchmarks

- **Single Request:** 2-4 seconds (500 words)

- **Concurrent Requests:** 8 simultaneous (tested)

- **Max Throughput:** ~120 requests/minute (theoretical)

- **Average File Size:** 30-50KB per minute of speech

- **Memory per Worker:** ~20-30MB idle, ~50MB active

Comments (0)